Key takeaways

- Understanding tools and concepts won’t get you hired. The real differentiator is the ability to apply AI to solve business problems and deliver measurable outcomes.

- There is no shortage of AI learners. The shortage is in candidates who can handle real-world complexity: messy data, unclear requirements, and business constraints.

- Students become job-ready when they work on real use cases, use structured problem-solving frameworks, and build end-to-end solutions in realistic environments.

Artificial intelligence is no longer a future trend. It is already reshaping how companies build products, streamline operations, make decisions, and compete. For students, that sounds like a massive opportunity. And it is. But it is also where one of the biggest misconceptions begins.

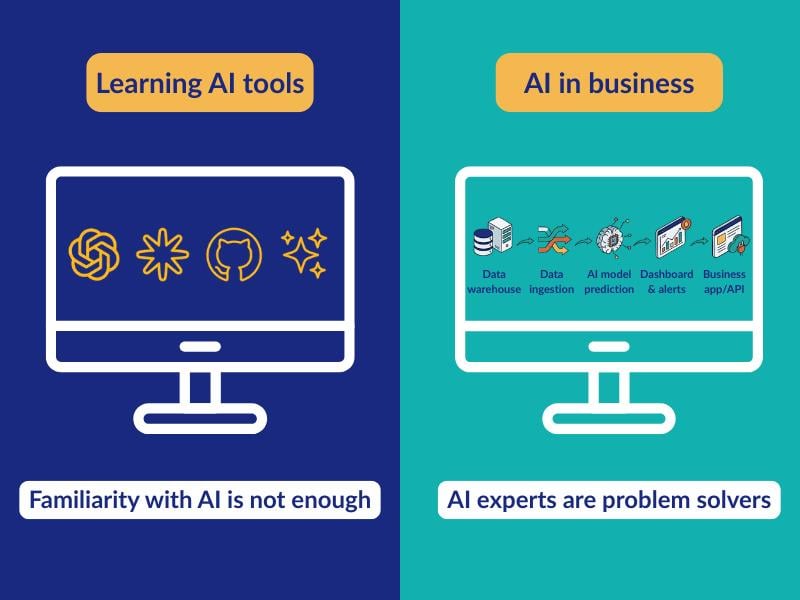

Many students assume that learning AI tools, completing certifications, or building a few academic projects is enough to become job-ready. Employers increasingly expect something else: not just familiarity with AI, but the ability to apply it in real business environments. That is the difference between learning AI and becoming enterprise-ready AI talent.

The career opportunity in AI is too big to ignore. The World Economic Forum's Future of Jobs Report 2025 projects that by 2030, global labor markets could see 170 million new roles created and 92 million displaced, a net gain of 78 million jobs.

The AI boom is real — but the talent gap is growing

AI adoption is clearly accelerating across industries. Students are experimenting with generative AI, universities are expanding AI-related programs, and businesses are investing heavily in AI-led transformation. On the surface, it looks as if the talent pipeline is keeping up. It is not.

The market is not short on people who have heard of AI, used AI tools, or completed AI courses. It is short on people who can turn AI capability into measurable business outcomes. Many learners understand the tools and the concepts, but struggle when asked to apply them in a business context.

That gap becomes visible the moment graduates move from classroom-style tasks into enterprise environments. In those settings, problems are rarely neat. Data is incomplete, requirements are vague, stakeholders disagree, timelines are tight, and outcomes matter more than technical elegance. Knowing the language of AI is useful. Knowing how to create value with AI is what makes someone employable.

What AI talent actually means

The term AI talent gets used loosely, and that is part of the problem. For many learners, it is reduced to tool familiarity, prompt writing, or a basic understanding of machine learning concepts. Those things matter, but they do not capture the full picture.

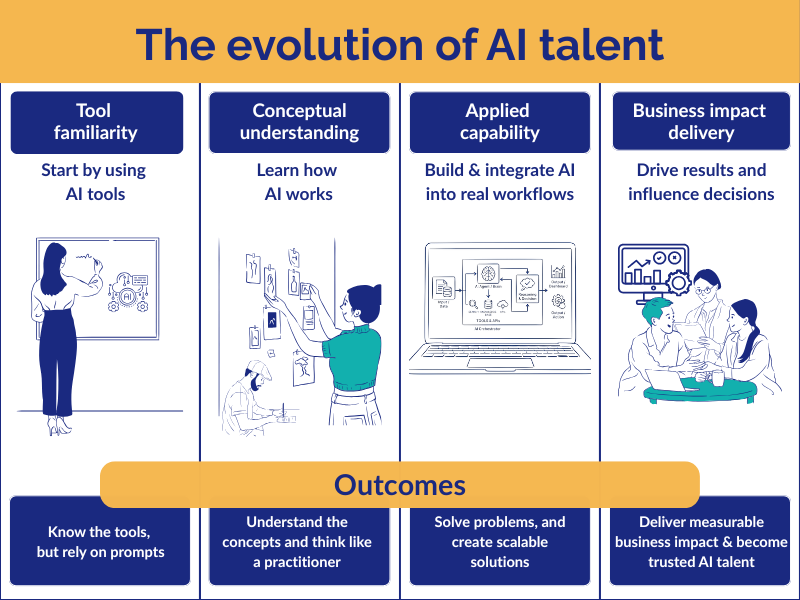

A better way to think about AI talent is in four layers.

The first is tool familiarity: using tools like ChatGPT, model APIs, or AutoML platforms. The second is conceptual understanding: knowing machine learning foundations, NLP, computer vision, or large language models. The third is applied capability: identifying real use cases, working with data, and integrating AI into workflows. The fourth and most valuable layer is business impact delivery: solving business problems, building end-to-end solutions, measuring outcomes, iterating, and scaling what works.

The industry does not hire AI learners. It hires AI problem solvers.

What “enterprise-ready” really means

If AI talent is about capability, enterprise readiness is about context.

Enterprise readiness is the ability to operate in environments that are complex, ambiguous, large-scale, and outcome-driven. It means being able to work with messy real-world data, translate vague business problems into structured use cases, collaborate across engineering and business teams, and make trade-offs between performance, cost, speed, scalability, and risk.

This is where traditional academic preparation often falls short. In academic settings, students usually solve clearly defined problems with clean datasets and known evaluation metrics. In enterprise settings, the challenge is different. You may have to define the problem before you solve it. You may have to align stakeholders before you build anything. And you may have to prove business value before anyone cares about the model.

That is why enterprise readiness matters so much. It is not a buzzword. It is the difference between knowing AI in theory and applying AI where outcomes, constraints, and accountability are real.

The shift from AI users to AI orchestrators

Another reason this gap is widening is that the nature of AI work itself is changing.

Earlier AI roles often centered on model building, algorithm tuning, and isolated experimentation. Those skills still matter, but enterprise AI is shifting toward something broader: designing workflows, integrating systems, and operationalizing intelligence across business processes.

That is why the move from AI users to AI orchestrators is such a useful way to describe the market. An AI orchestrator is not someone who merely uses a tool well. It is someone who can connect models, APIs, data, workflows, and systems to create business value at scale.

This matters for students because it changes what readiness looks like. It is no longer enough to know how a model works. Increasingly, employers care whether you can make AI work inside real operating environments.

What employers actually look for

Employers are becoming more precise in how they evaluate AI talent. They are not just asking what candidates know. They are asking what candidates can do.

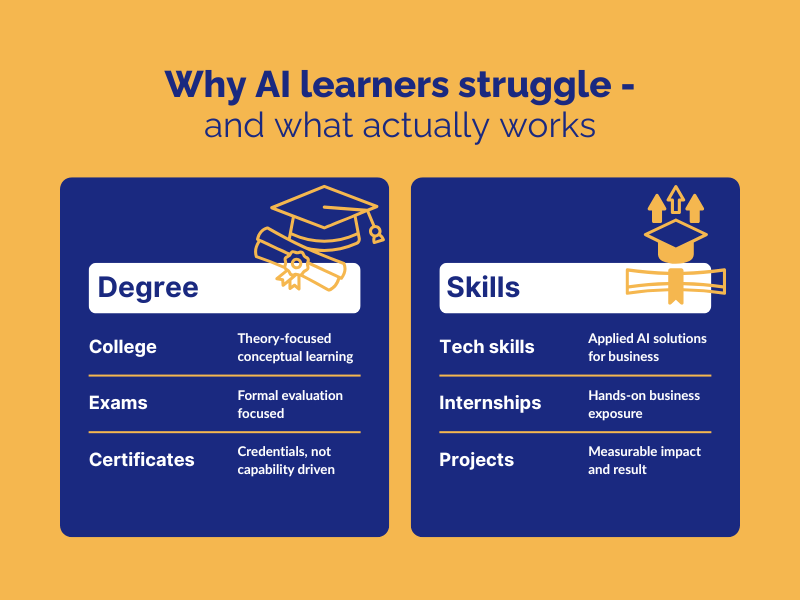

They look for evidence of problem-solving ability, hands-on project experience, workflow design, business awareness, communication, collaboration, and measurable outcomes. This is why portfolios now carry more weight than resumes alone. Projects matter more than certificates. Outcomes matter more than theoretical knowledge.

Students who can show how they framed a problem, selected an approach, handled imperfect data, made trade-offs, and measured impact stand out more than students who only list tools and courses.

Why traditional learning models often fall short

Traditional education still matters. Foundational knowledge matters. But many learning models have not evolved fast enough to match what enterprise AI work now demands.

The most common gaps are familiar: curricula that lag industry change, limited exposure to real-world problems, too much emphasis on assignments instead of execution, weak links between technical learning and value creation, and too little room for experimentation, iteration, and failure. Students are often taught how AI works. Employers need people who understand how AI delivers value.

Closing that gap takes more than adding another tool workshop or one more certification module. It requires a different model of learning.

What needs to change

To build enterprise-ready AI talent, learning must be applied, structured, and experiential.

Applied learning means working on use cases that resemble real business problems, not just classroom exercises. Structured learning means using frameworks to identify opportunities, define outcomes, prioritize use cases, and execute in a repeatable way. Experiential learning means having safe environments where students can test ideas, fail, refine, and improve.

A big part of that shift is giving students both a method and an environment to build real readiness. A framework such as the Digital Business Innovation Methodology helps learners move from vague business problems to clearly defined use cases, priorities, and measurable outcomes. An AI Innovation Sandbox complements that by giving them a governed space to test ideas, work across realistic workflows, and refine solutions before they are expected to deliver in live enterprise settings. Together, they help close the gap between classroom learning and enterprise execution.

For students trying to move from AI awareness to real readiness, that kind of model matters. It also explains why engineering colleges are rethinking how AI education should be delivered. Institutions that want stronger employability outcomes are increasingly looking beyond theory-led instruction and toward models that combine applied learning, structured problem-solving, and hands-on experimentation.

For students, the benefit is direct: learning becomes much closer to how AI is actually applied in enterprise environments. That is also where a practice-led route such as Calibo AI Academy becomes relevant—not as another generic AI program, but as a model that is closer to enterprise use, execution, and outcomes.

What enterprise-ready AI talent finally looks like

So, what does enterprise-ready AI talent actually look like?

It looks like someone who can understand business problems deeply, identify where AI can create value, build practical end-to-end solutions, work across teams and systems, and deliver measurable impact. It is not just a technical profile. It is a problem-solving, outcome-driven mindset.

For engineering students and graduates, this is the distinction that will matter most in the next phase of the AI economy. In a market shaped by AI, employability will not depend only on whether you learned the tools. It will depend on whether you learned how to apply them with context, discipline, and impact.

AI alone is not an advantage anymore.

Being enterprise-ready with AI is.

FAQs

1. How can a student prove enterprise readiness in AI without prior industry experience?

By showcasing real-world, end-to-end AI projects that define a business problem, use imperfect data, build a solution, and show measurable outcomes. A strong portfolio that explains decisions, trade-offs, and impact can carry more weight than certifications alone.

2. How should students evaluate whether an AI learning program is truly building enterprise readiness?

Students should look for programs that go beyond theory and tools and include real-world use cases, structured problem-solving frameworks, and hands-on project environments. A program that has students build solutions for realistic business scenarios is more likely to develop true enterprise readiness.

3. What are the key signs that a student is industry-ready for AI roles?

A student who is industry-ready for AI roles can translate business problems into AI solutions, build workflows, work with real-world data, and communicate results clearly. Such students focus on outcomes, not just models, and prove their skills through practical projects, not just coursework.
