Key takeaways

- AI agent adoption is accelerating, but many enterprises are still early in the shift from traditional automation pilots to outcome-led workflow transformation.

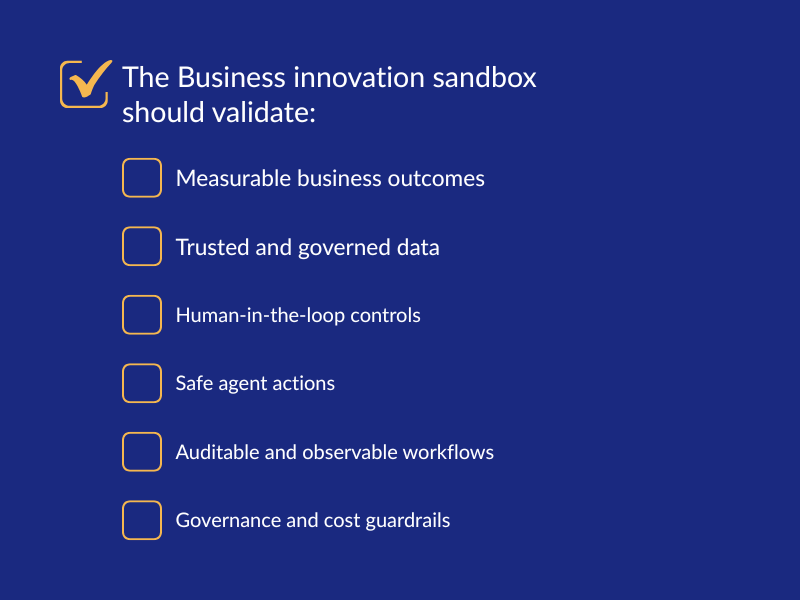

- A Business Innovation Sandbox helps teams test agentic workflows safely with governed data, clear success criteria, human oversight, and a culture of disciplined experimentation.

- A unified platform with embedded platform engineering capabilities at its foundation enables organizations to scale AI agents through reusable, governed paths from experimentation to production, connecting business outcomes, AI-ready data, and standardized delivery patterns.

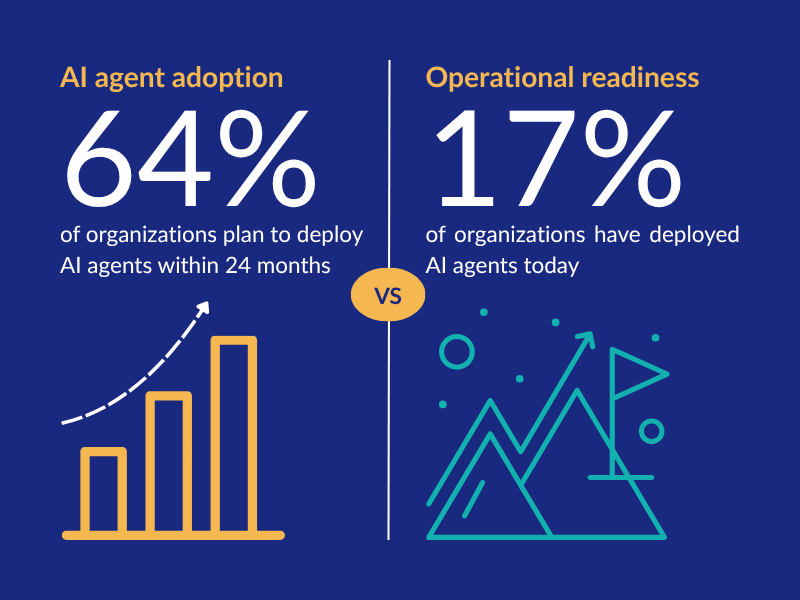

AI agents are moving quickly from executive conversation to enterprise experimentation. Gartner’s 2026 CIO and Technology Executive Survey found that only 17% of organizations had deployed AI agents at the time of the survey, while 64% expected to deploy them within the next 24 months. Gartner also notes that most enterprises are still using agents for incremental automation rather than reengineering processes for agentic AI.

That gap between adoption intent and operational readiness is where many enterprises will struggle.

For business innovation leaders, the pressure is clear: deliver visible AI progress without creating another backlog of pilots. For heads of AI, data and analytics, the challenge is equally urgent: make the data, context, and governance mature enough for AI agents to operate safely and effectively.

The next phase of agentic AI will not be won by teams that build the most POCs. It will be won by organizations that create a disciplined path from experimentation to production.

Why pilots stall before production

AI agent pilots often begin with enthusiasm and a broad ambition: automate a process or workflow, accelerate analytics, or modernize customer operations to improve productivity, increase revenue, or reduce costs. But many stall when the team moves from a controlled demo to real enterprise complexity.

- Scope grows too quickly: Teams try to solve too much at once instead of selecting and delivering one specific, bite-sized, high-impact use case at a time. A broad ambition like “deploy AI agents across the enterprise” is harder to execute than a focused goal such as “reduce manual handoffs in a customer onboarding process or financial approval workflow.”

- Data readiness gaps: AI agents need more than access to data. They need trusted, well-governed, business-contextualized data. Without clear ownership, metadata, lineage, semantic consistency, and access controls, agents cannot reliably reason across workflows.

- Governance arrives too late: As agent creation becomes easier, teams can create many agents before the enterprise has a way to discover, approve, monitor, or retire them. This is how agent sprawl begins.

- Work culture remains input-first: Many teams still define a task, hand it off, and wait for output. Agentic workflows require an outcome-first model: define the business result, clarify constraints, supervise execution, and validate whether the outcome was achieved.

The Business Innovation Sandbox is not a playground

A Business Innovation Sandbox is often misunderstood as a place for unrestricted experimentation. Freedom to experiment is only part of its value.

A well-designed sandbox gives teams a governed environment to test agentic workflows using realistic data patterns, enterprise constraints, and measurable success criteria before moving anything into production. Where policy allows, teams can extract a minimal viable set of production data aligned with the use case, with sensitive data masked, to test whether agents behave as expected against real-world complexity.
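As an illustration of masking sensitive data before it reaches a sandbox, the sketch below replaces sensitive field values with salted hash tokens. The field names, the salt, and the `mask_record` helper are all assumptions made for this example. Salted hashing is pseudonymization rather than full anonymization, so an actual masking policy may call for a stronger technique.

```python
import hashlib

# Hypothetical list of fields that policy treats as sensitive.
SENSITIVE_FIELDS = frozenset({"email", "national_id"})


def mask_record(record: dict, sensitive=SENSITIVE_FIELDS, salt: str = "sandbox-demo") -> dict:
    """Return a copy of the record with sensitive values replaced by tokens.

    The same input value always maps to the same token, so joins across
    masked tables still line up. The original record is not modified.
    """
    masked = {}
    for key, value in record.items():
        if key in sensitive:
            digest = hashlib.sha256(f"{salt}:{value}".encode("utf-8")).hexdigest()
            masked[key] = f"masked_{digest[:10]}"
        else:
            masked[key] = value
    return masked
```

Keeping the tokens deterministic is a deliberate trade-off in this sketch: it preserves referential integrity for testing agent behavior, at the cost of weaker privacy guarantees than random replacement.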

For a business innovation lead, the sandbox reduces the risk of “pilot purgatory.” It creates a structured way to test ideas quickly, validate business value, and decide what should move forward.

For a head of data and analytics, the sandbox reveals whether the data foundation is ready. It shows where definitions are inconsistent, where pipelines are brittle, where metadata is missing, and where governance controls need to be strengthened.

This is how experimentation becomes evidence-based.

Platform engineering turns experimentation into a repeatable path

The sandbox helps teams experiment, learn, and measure outcomes quickly and safely. Platform engineering foundations embedded in the sandbox help them scale.

Without platform engineering, each AI agent initiative risks becoming a one-off project with its own tools, integrations, access patterns, and governance assumptions. That slows delivery and increases risk.

Platform engineering creates the “paved roads” organizations need to move faster without bypassing control. For agentic AI, those paved roads may include reusable data pipelines, approved development environments, model and tool access patterns, role-based permissions, observability, evaluation workflows, cost controls, and deployment templates.

This matters because agent development is not the same as traditional application development. Gartner describes the agent development life cycle (ADLC) as a structured and repeatable methodology for building AI agents with safety, security, and alignment by design. Gartner also recommends engaging platform engineering teams to manage, scale, and govern the tools, platforms, context artifacts, and frameworks that support agent development.

That is the shift enterprises need. Platform engineering should not be a bottleneck. It should be the foundational operating layer that lets innovation teams and data teams move faster with guardrails already built in.

Culture matters: move from input-first to outcome-first work

Technology alone will not industrialize AI agents. Teams also need a new way of working.

In traditional automation, humans define steps and systems execute them. In agentic workflows, humans increasingly define outcomes, policies, and review points while agents help coordinate parts of the work.

That does not mean removing people. For most enterprise use cases, supervised autonomy is the right near-term model. People remain responsible for defining goals, validating outputs, handling exceptions, and approving high-impact actions.
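Supervised autonomy can be sketched as a simple approval gate: low-impact actions run directly, while high-impact actions wait for a human decision. The `ProposedAction` type, the `high_impact` flag, and the `approve` callback are hypothetical names chosen for this example. How an action gets classified as high-impact is a policy question the sketch does not answer.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class ProposedAction:
    description: str
    high_impact: bool  # assumed to be set by policy, not by the agent itself


def execute_with_oversight(
    action: ProposedAction,
    approve: Callable[[ProposedAction], bool],
) -> str:
    """Run low-impact actions directly; ask a human before high-impact ones.

    `approve` stands in for whatever review step an organization uses,
    for example a ticket, a chat prompt, or a sign-off screen.
    """
    if action.high_impact and not approve(action):
        return "rejected"
    return "executed"
```

For instance, calling `execute_with_oversight(ProposedAction("issue refund", True), reviewer)` only proceeds when `reviewer` returns `True`, while a low-impact action such as drafting a reply never invokes the reviewer at all.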

This shift changes the talent model. Business teams need enough AI literacy to define outcomes and evaluate results. Data teams need to provide trusted context, not just raw data access. Platform teams need to make safe experimentation easy. Innovation leaders need to help teams avoid random experimentation and focus on use cases that can become reusable business capabilities.

A strong AI culture is not a culture of “try anything.” It is a culture of disciplined learning and problem solving.

A practical path for business innovation and data leaders

The most effective path from AI agent pilot to production is incremental.

Start with one high-impact workflow. Choose a use case with clear business value, manageable risk, and available or attainable data.

Map the workflow. Identify the people, systems, decisions, data sources, policies, and approval points involved.

Validate data readiness. Confirm quality, access rights, lineage, semantic definitions, and masking requirements.

Test in the Business Innovation Sandbox. Use the sandbox to evaluate agent behavior, workflow boundaries, human review points, and measurable outcomes.

Productionize with platform engineering discipline and rigor. Move successful patterns onto reusable, governed platform foundations instead of rebuilding from scratch.

Repeat. Use each productionized workflow to create reusable components, lessons, and governance patterns for the next use case.

This is how enterprises move from isolated pilots to a portfolio of governed AI-enabled workflows.
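The data readiness step in the path above lends itself to a simple checklist gate. The sketch below uses the five items named in that step. The check names and the helper functions are assumptions for illustration, and each boolean would in practice come from a review or automated test that is out of scope here.

```python
# The five readiness items from the "Validate data readiness" step.
READINESS_CHECKS = (
    "quality",
    "access_rights",
    "lineage",
    "semantic_definitions",
    "masking",
)


def readiness_gaps(dataset_checks: dict) -> list:
    """Return the checks that are missing or failed, in checklist order."""
    return [name for name in READINESS_CHECKS if not dataset_checks.get(name, False)]


def ready_for_sandbox(dataset_checks: dict) -> bool:
    """A dataset is ready only when every check has explicitly passed."""
    return not readiness_gaps(dataset_checks)
```

Treating a missing check as a failure is intentional: a dataset that nobody has assessed for lineage is handled the same way as one that failed the assessment.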

How Calibo supports disciplined agentic AI innovation

Calibo helps enterprise teams accelerate governed AI adoption by making data AI-ready, unifying development with an ontology-based semantic layer, and orchestrating ML, GenAI, and agentic AI use cases with governance at scale.

For AI agent initiatives, that foundation matters. The Calibo Business Innovation Sandbox gives teams a controlled environment to prototype and validate ideas. Platform engineering capabilities help create reusable paths for provisioning, data preparation, orchestration, governance, and productionization. Calibo’s Business Innovation Methodology can help teams break complex ambitions into manageable use cases. The Calibo AI Academy can support the cultural shift by helping teams build the skills to supervise AI execution, validate outputs, and connect agentic workflows to business outcomes.

Together, these capabilities help organizations move from tools-first experimentation to governed, business-led innovation.

From hype cycle to operating model

AI agents may be on an aggressive adoption curve, but adoption alone will not create enterprise value. The real work is building the operating model around them.

Business innovation leaders need a way to move ideas from sandbox to production. Heads of data and analytics must engage business teams to create trustworthy, AI-ready data aligned to each use case. Platform engineering teams need to provide the paved roads that make safe scale possible.

The enterprises that succeed with agentic AI will not be the ones that chase every new tool. They will be the ones that combine experimentation with discipline, culture with governance, and innovation with a production-ready platform foundation.

FAQs

What is the role of the Calibo Business Innovation Sandbox in agentic AI?

The Calibo Business Innovation Sandbox provides a governed environment to prototype, test, and validate AI agent workflows before production. It helps evaluate data readiness, agent behavior, human review points, and measurable outcomes in a controlled setting.

Why does platform engineering matter for building AI agents?

Platform engineering matters because AI agents require governed foundations, not just experimentation space. In the Business Innovation Sandbox, built-in capabilities such as self-service provisioning, role-based access, orchestration, integration, and guardrails enable platform engineering practices from the start. This helps teams prototype agents safely, validate outcomes, and establish repeatable delivery patterns.

How can enterprises prevent AI agent pilots from getting stuck?

Teams should start with one focused business outcome, validate whether the required data is ready, test the workflow in a governed sandbox, and define clear human review points. Successful patterns can then be productionized, helping enterprises move from input-first task execution to outcome-first supervision.
