By Sanjeev Singh, Head of Product Management, Calibo

Why most enterprises stall between AI pilots and production, and what a governed data foundation changes.

There’s a conversation happening in almost every enterprise boardroom right now:

We’ve invested in the cloud. We’ve got the AI tools. We’ve run the pilots. Why isn’t any of this scaling?

It’s a frustrating place to be—especially when you’ve done everything right on paper. The models are good. The tooling is solid. The use cases are compelling. And yet, somewhere between the pilot and production, things fall apart.

Here’s the uncomfortable truth most technology vendors won’t tell you: the bottleneck isn’t the AI. It’s the data underneath it.

The problem no one wants to own

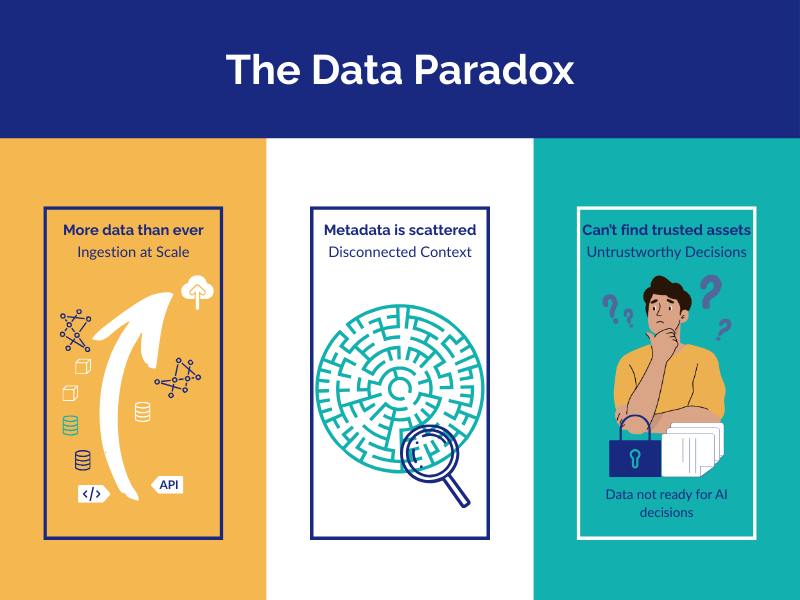

Most enterprises are sitting on a paradox. They have more data than ever: warehouses, lakes, streams, APIs, and SaaS platforms all generating signal at scale. Yet very little of that data is actually ready to be used meaningfully by the business, let alone by an AI agent making autonomous decisions.

Governance is manual and inconsistent. Metadata is scattered. Business users can’t find or trust what they need. And the critical knowledge about what data means—its context, lineage, and relationships—is locked inside the heads of a handful of overworked data engineers.

When you introduce agentic AI into this environment, the problem doesn’t stay manageable. It multiplies.

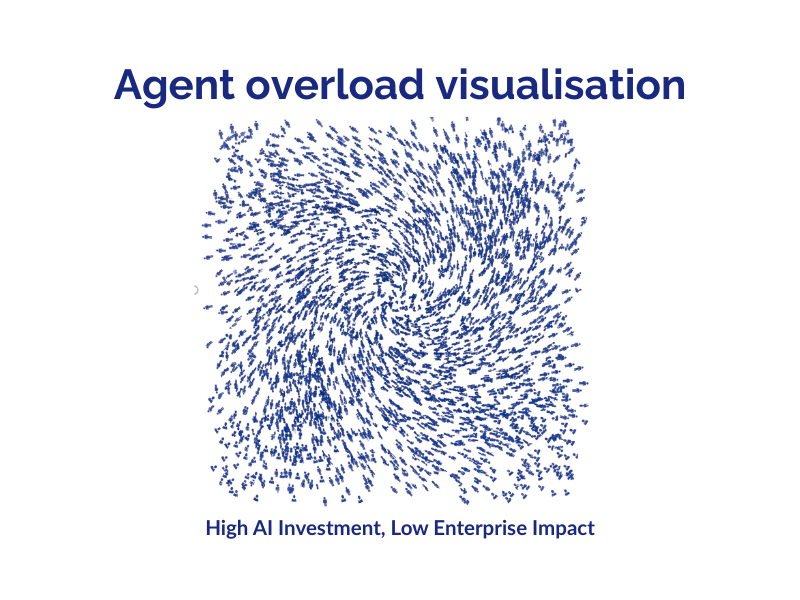

Picture a mid-sized enterprise running thousands of AI agents simultaneously, each one making decisions that affect revenue, compliance, and customer experience. Now picture those agents pulling from fragmented, ungoverned, inconsistently defined data. Each agent develops its own partial view of the business. Decisions conflict. Outcomes can’t be explained. Compliance teams can’t audit a thing. Business trust erodes.

The result is predictable: high AI investment and low enterprise impact.

The enterprises that will win the agentic era won’t be the ones with the most sophisticated models. They’ll be the ones with the best data assets and governance. That’s the race that matters—and most organizations haven’t even started running it yet.

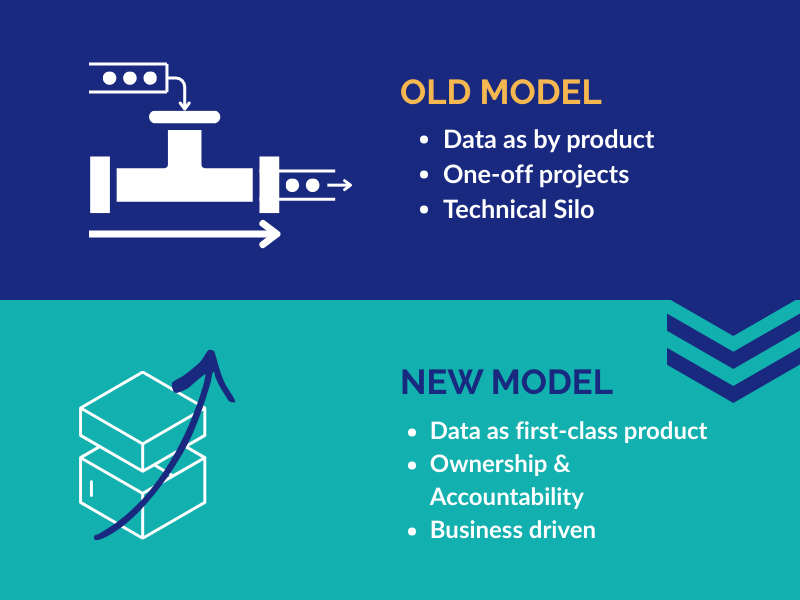

Data needs to be treated like a product, not a pipeline

For decades, the industry treated data as a byproduct—something created along the way as systems run, transactions process, and users interact. Data pipelines were built as one-off projects, owned by technical teams, and largely invisible to the business.

That model is broken.

At Calibo, we believe data must be treated as a first-class product—with ownership, accountability, quality standards, and business intent baked in from day one. Not something that gets cleaned up after the fact. Not something that lives in a technical silo waiting to be requested. A product that business teams can discover, trust, and use to make decisions and drive innovation.

This shift in thinking changes everything about how you build and scale your data ecosystem.

What Calibo actually does

Calibo is a capability-first data sandbox layer—and that word “sandbox” matters. We don’t come in and replace your existing stack. We don’t ask you to rip out Snowflake or Databricks or your cloud data warehouse. We sit alongside your investments and do something those tools weren’t designed to do: transform raw data into governed, discoverable, reusable, AI-ready Data Assets.

Think of it as adding intelligence and control to infrastructure you already have. Calibo is cloud-agnostic, tool-aware, and built to integrate with enterprise ecosystems. What it adds is the connective tissue—the semantic layer, lineage, quality controls, and governance automation—that turns data from raw signal into something the business can actually act on.

And this isn’t a new idea we’re still figuring out. Calibo’s journey started over a decade ago. Eight of those years have been spent building real-world enterprise maturity across regulated industries like financial services, healthcare, manufacturing, and retail. What customers get isn’t a clever concept or a beta product; it’s a refined methodology and a battle-tested sandbox built from hard-won experience.

The approach: start small, scale fast, don’t break anything

One of the most common failure patterns in data transformation is starting too big: massive multi-year programs with sweeping architectural mandates that never quite deliver, cost far more than expected, and leave business teams just as dependent on data engineers as they were before.

Calibo’s methodology flips this model.

We start with business instincts, not technology requirements. Instead of asking “what does your data architecture look like?”, we ask “what decisions do you want to make better?” From there, we define bite-sized, high-impact use cases and capture them as user stories—not data specs. Business teams lead; technical teams enable.

Each use case is delivered as an experience-driven data asset. Not a report. Not a dashboard. A curated, documented, quality-checked, AI-ready product that business users can actually experience—often within weeks, not months.

We call the underlying philosophy Minimum Viable Data (MVD): identify the smallest slice of data that delivers real value, apply governance and quality from day one, and let each iteration build on—rather than restart—the last. Progress compounds. Momentum builds.

What makes this work is the Calibo sandbox itself. Innovation happens in a governed environment within your own infrastructure—no disruption to existing transactional systems, full enforcement of security, privacy, compliance, and quality standards. Once something is proven, it flows into your operational systems: ERP, CRM, supply chain platforms, and digital channels. As you scale, you never trade speed for consistency, or innovation for compliance. You get both.

What’s under the hood

For those who want to understand what this looks like technically, Calibo delivers across five core areas:

- Data Assets as certified products: every dataset is named, documented, quality-checked, and catalogued—reusable across teams and regions, not rebuilt from scratch for every project.

- Embedded data quality and observability: profiling, validation, drift detection, and anomaly alerts are built directly into pipelines, not bolted on after something breaks.

- A semantic layer that gives data a brain: Calibo’s Data Semantic Layer (DSL) builds a knowledge graph and ontology around your data, capturing business meaning, lineage, and relationships, so humans and AI systems can understand and reason over your data without relying on the same three people who’ve been here since 2014.

- AI-ready data by design: standardized training and inference datasets, integrated feature engineering workflows, support for RAG models on proprietary enterprise data, and policy-driven governance of LLM usage. Organizations stay model-agnostic while maintaining full control.

- Explainability built into the asset, not added on top: because lineage, quality metrics, and metadata are properties of the Data Asset itself, AI-assisted decisions can be traced, explained, and audited. That’s not just good practice—it’s increasingly a regulatory requirement.
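To make the idea of a Data Asset that carries its own ownership, lineage, and quality checks a little more concrete, here is a minimal Python sketch. It is an illustration only, not Calibo’s API: the `DataAsset` and `QualityCheck` classes, the `orders_curated` asset, its upstream names, and the sample rows are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

Row = Dict[str, object]


@dataclass
class QualityCheck:
    """A named rule; the predicate returns True when a row passes."""
    name: str
    predicate: Callable[[Row], bool]


@dataclass
class DataAsset:
    """Hypothetical data product: metadata, lineage, and checks travel together."""
    name: str
    owner: str
    description: str
    upstream: List[str] = field(default_factory=list)  # lineage: source names
    checks: List[QualityCheck] = field(default_factory=list)

    def quality_report(self, rows: List[Row]) -> Dict[str, float]:
        """Return the pass rate (0.0 to 1.0) of each check over the rows."""
        report = {}
        for check in self.checks:
            passed = sum(1 for row in rows if check.predicate(row))
            report[check.name] = passed / len(rows) if rows else 1.0
        return report

    def is_certified(self, rows: List[Row], threshold: float = 1.0) -> bool:
        """An asset counts as certified when every check meets the threshold."""
        return all(
            rate >= threshold for rate in self.quality_report(rows).values()
        )


# Illustrative asset and sample data (all names are made up).
orders = DataAsset(
    name="orders_curated",
    owner="sales-ops",
    description="One row per confirmed customer order.",
    upstream=["crm.orders_raw", "erp.invoices"],
    checks=[
        QualityCheck(
            "amount_is_positive",
            lambda r: isinstance(r.get("amount"), (int, float))
            and r["amount"] > 0,
        ),
        QualityCheck("customer_id_present", lambda r: bool(r.get("customer_id"))),
    ],
)

sample = [
    {"customer_id": "C1", "amount": 120.0},
    {"customer_id": "", "amount": 35.5},  # fails the customer_id check
]

report = orders.quality_report(sample)
print(report)
print("certified:", orders.is_certified(sample))
```

Because the checks and the upstream list are fields of the asset itself, anything consuming `orders`, whether a person or an agent, can ask the same object where the data came from and whether it currently passes its quality bar.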

What customers achieve

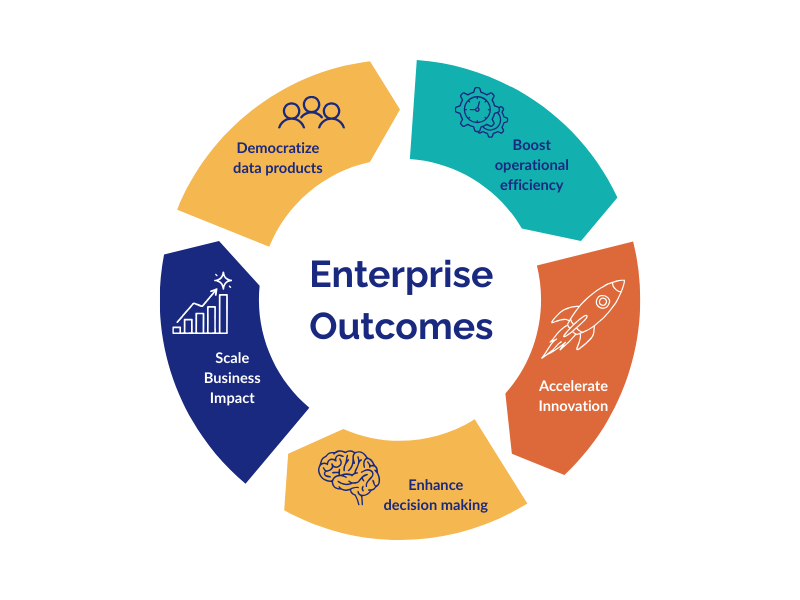

Enterprises that adopt this approach typically see outcomes in five areas:

- Democratize data products: business teams gain self-service access to trusted data without filing tickets for every request.

- Establish a real single source of truth: harmonized, governed data across systems and regions that teams can trust and build on.

- Accelerate AI initiatives: not by running more experiments, but by finally having the data foundation that makes production AI viable.

- Reduce operational costs: eliminating redundant pipelines, managing data lifecycle intelligently, and reducing the sprawl that accumulates in every large data estate.

- Position data as a revenue-generating asset: share governed, reusable data products internally or externally in ways that create competitive advantage.

Why the timing matters

Agentic AI is no longer a future consideration. Enterprises are deploying autonomous agents now. These agents need consistent, governed, trustworthy data to operate effectively at scale. Without it, they’ll deliver inconsistent outcomes, create compliance exposure, and erode trust faster than they create value.

The window to build the right data foundation isn’t infinite. Organizations that treat this as urgent—that start now, start small, and build incrementally toward a governed data product ecosystem—will compound AI value over time. The others will keep running pilots.

The model isn’t what’s holding you back. It never was.

Ready to move from pilots to production?

Calibo enables enterprises to innovate boldly—and operate responsibly. If your AI initiatives are stuck between pilot and production, let’s talk about what a governed data sandbox can do for your organization.
